Language learning (acquisition): what is it?

- Presented with a sequence of grammatical sentences (or words), be able to recognize novel sentences (or words) as grammatical or ungrammatical.

- Presented with several instances of a word in context, be able to interpret or produce that word appropriately in a new context.

- Presented with grammatical sentences in context, be able to interpret or produce novel sentences in new contexts.

- Presented with sentences (words), learn to find the boundaries between the units (words, morphemes).
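The boundary-finding task can be made concrete by placing a boundary wherever the transitional probability between adjacent syllables is low. The sketch below is only an illustration under assumptions these notes do not make: the input is already syllabified, the toy corpus and the `threshold` value are invented, and the names `transitional_probs` and `segment` are hypothetical.

```python
from collections import Counter

def transitional_probs(utterances):
    """Estimate P(next syllable | current syllable) from syllable sequences."""
    pair_counts = Counter()
    first_counts = Counter()
    for syllables in utterances:
        for a, b in zip(syllables, syllables[1:]):
            pair_counts[(a, b)] += 1
            first_counts[a] += 1
    return {pair: n / first_counts[pair[0]] for pair, n in pair_counts.items()}

def segment(syllables, tp, threshold):
    """Insert a boundary wherever the transitional probability is below threshold."""
    if not syllables:
        return []
    words, current = [], [syllables[0]]
    for a, b in zip(syllables, syllables[1:]):
        if tp.get((a, b), 0.0) < threshold:
            # Low predictability: treat this point as a word boundary.
            words.append("".join(current))
            current = []
        current.append(b)
    words.append("".join(current))
    return words

# Invented toy corpus: unsegmented utterances built from three two-syllable words.
corpus = [
    ["pre", "tty", "ba", "by"],
    ["pre", "tty", "do", "ggy"],
    ["ba", "by", "do", "ggy"],
    ["do", "ggy", "pre", "tty"],
    ["do", "ggy", "ba", "by"],
    ["ba", "by", "pre", "tty"],
]
tp = transitional_probs(corpus)
novel = ["do", "ggy", "pre", "tty", "ba", "by"]
segmented = segment(novel, tp, threshold=0.75)
```

In this corpus the within-word transitions have probability 1.0 and the between-word transitions 0.5, so any threshold between the two recovers the three words from the novel utterance. Real input is far noisier, and the learner sees only positive evidence here, with no labeled boundaries.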

Language learning: why is it hard?

- Language learners receive a finite amount of sometimes errorful input (the "poverty of the stimulus" argument).

- Language learning is mostly unsupervised.

- Learners receive positive evidence, but no (direct) negative evidence.

- Learning is sometimes very rapid.

Kinds of information

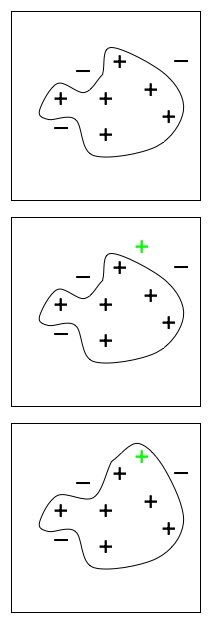

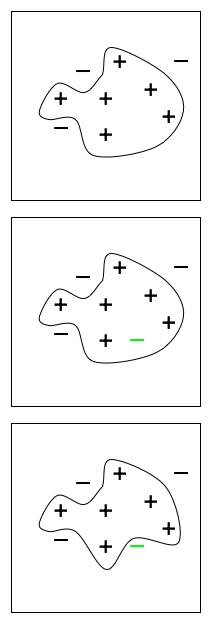

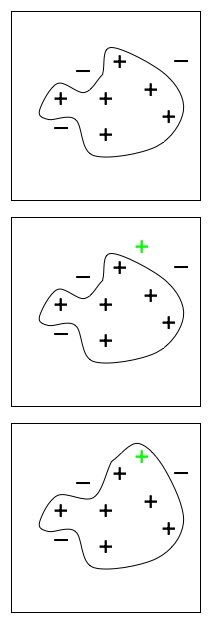

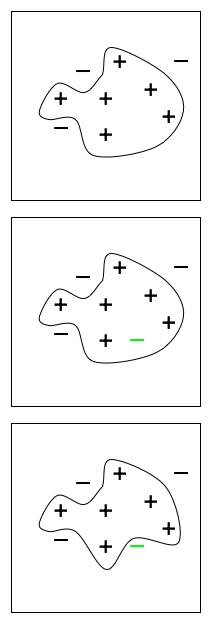

- Two kinds of evidence in learning


- Positive evidence

- Information about what is correct

- Causes the system to extend its hypothesis

- Negative evidence

- Information about what is not correct

- Explicit, labeled incorrect patterns

- Correction for the system's errors

- Information that there is an error somewhere, as in reinforcement learning

- Indirect (implicit) negative evidence: non-occurrence of something that would otherwise be expected; useful in generative (Bayesian) learning models

- Active learning: learner asks about questionable utterances, receiving useful negative feedback

- Causes the system to restrict its hypothesis

- Without negative evidence, is language learning possible?

- Alternatives to negative evidence: constraints

- Built-in (innate) constraints

- Constraints from the environment (input)
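To see how indirect negative evidence can restrict a hypothesis in a Bayesian model, consider a minimal sketch with two nested hypotheses about which frames a verb allows. Everything specific here is an assumption made for illustration: the frame labels `"PP"` and `"DO"`, the equal priors, the uniform-sampling likelihood, and the function name `posterior`.

```python
from fractions import Fraction

def posterior(hypotheses, priors, data):
    """Posterior over hypotheses, assuming each observation is drawn
    uniformly from the set of forms the true hypothesis allows."""
    scores = {}
    for name, forms in hypotheses.items():
        likelihood = Fraction(1)
        for x in data:
            # A form outside the hypothesis has probability 0 under it.
            likelihood *= Fraction(1, len(forms)) if x in forms else Fraction(0)
        scores[name] = priors[name] * likelihood
    total = sum(scores.values())
    if total == 0:
        raise ValueError("no hypothesis is compatible with the data")
    return {name: s / total for name, s in scores.items()}

# Hypothetical hypotheses: the verb allows one frame, or both.
hypotheses = {"narrow": {"PP"}, "wide": {"PP", "DO"}}
priors = {"narrow": Fraction(1, 2), "wide": Fraction(1, 2)}

# Five observations, all in the same frame; "DO" never occurs.
after_five = posterior(hypotheses, priors, ["PP"] * 5)

# A single positive example of the other frame rules out the narrow hypothesis.
after_do = posterior(hypotheses, priors, ["PP"] * 5 + ["DO"])
```

With five observations and no `"DO"` frame, the narrow hypothesis ends up with posterior 32/33, even though no one ever told the learner that `"DO"` is wrong: the wide hypothesis is penalized because it predicted forms that did not occur. One positive `"DO"` example reverses this and extends the hypothesis to the wide one.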

The problem for word meaning

- Is word learning supervised?

- Is negative evidence available?

- Quine's problem

- Figuring out what the referent of a novel word is

- Figuring out what aspects of the referent a novel word refers to

- Which word in an utterance goes with which part of a scene?

- How learning might be constrained

- Constraints on inter-lexicon relationships: mutual exclusivity

- Constraints on kinds of categories (meanings)

- Whole object

- Taxonomic vs. thematic relations

- "Dimensional" (attentional) biases: shape, material; language-specific biases (L. Smith, Bowerman, etc.)

- Syntactic bootstrapping (Gleitman, etc.): guess the meaning of new words on the basis of the meanings of words that occur in similar syntactic patterns

Lois gorped the brick to Clark.

- Constraints from non-linguistic behavior
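The question of which word goes with which part of a scene, and the effect of a mutual exclusivity constraint, can be illustrated with a toy learner. This is a sketch under strong assumptions that go beyond these notes: scenes arrive as clean sets of candidate referents, every word refers to something in its scene, and the names `cross_situational` and `apply_mutual_exclusivity` are hypothetical.

```python
def cross_situational(situations):
    """For each word, keep only the referents present in every scene
    in which the word was heard (intersection across situations)."""
    candidates = {}
    for words, referents in situations:
        for w in words:
            if w in candidates:
                candidates[w] &= set(referents)
            else:
                candidates[w] = set(referents)
    return candidates

def apply_mutual_exclusivity(candidates):
    """Once a word has a single candidate referent, remove that referent
    from the candidate sets of all other words; repeat until stable."""
    candidates = {w: set(refs) for w, refs in candidates.items()}
    changed = True
    while changed:
        changed = False
        settled = {next(iter(refs)): w
                   for w, refs in candidates.items() if len(refs) == 1}
        for w, refs in list(candidates.items()):
            if len(refs) > 1:
                taken = {r for r, owner in settled.items() if owner != w}
                remaining = refs - taken
                # Never empty a word's candidate set entirely.
                if remaining and remaining != refs:
                    candidates[w] = remaining
                    changed = True
    return candidates

# Invented input: (words heard, referents in the scene).
situations = [
    (["dog", "ball"], {"DOG", "BALL"}),
    (["dog", "cat"], {"DOG", "CAT"}),
]
ambiguous = cross_situational(situations)
resolved = apply_mutual_exclusivity(ambiguous)
```

After the two scenes, co-occurrence alone settles only "dog"; "ball" and "cat" each still have two candidates. Assuming that no two words share a referent removes DOG from both, leaving one referent per word. The constraint does the work that negative evidence would otherwise have to do.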

The problem for grammar

- Learning English passive

- The girl is hugging the woman.

The woman is being hugged (by the girl).

- Chuck chopped the onions.

The onions were chopped (by Chuck).

- Lois became a lawyer.

*A lawyer was become (by Lois).

- Semantic bootstrapping (Pinker): use (built-in) semantic features to constrain the kinds of syntactic patterns that are possible

- Grammatical parameters (Chomsky, etc.)

Innatist vs. empiricist theories

- What could be innate

- General purpose mechanisms (not specific to language)

- Language-specific mechanisms

- Categories (noun, agent, etc.)

- Parameters: set on the basis of input

- The importance of the input

- How impoverished is it?

- How does it change with the competence of the child?

- How variable is it (within and between languages)?

- How do children learning different languages differ?

- What statistical regularities does it embody?

- Empiricist possibilities

- The role of the environment: embodied models